Look who’s talking: new AI voice filters pose a tremendous concern

Image via Murf.ai

Your voice or mine? Through a process called deep learning, text can be converted into speech, making it simple to clone anyone’s voice at any time. Artificial intelligence is progressing far too quickly for our human minds to keep up.

Take a trip onto social media and you will be greeted by a whole new side of technology taking over the minds of our youth. New voice filters support 20 different languages and are used to create fake audio clips that sound identical to a real person’s voice. The feature is available for free on the internet and could essentially be used like a game, one with life-altering consequences.

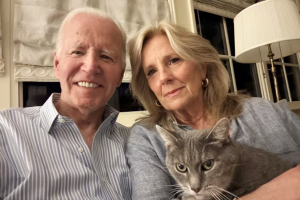

Although these new advances allow for tremendous entertainment, like the ability to make Barack Obama sing your favorite song on command, they also carry detrimental consequences. A video of someone using vulgar language or saying something out of character creates confusion and makes it difficult to know what is real and what is fake. On apps like TikTok, where impressionable kids come across hundreds of new micro-celebrity faces per day, a robot-generated copy of a creator’s voice wouldn’t come across as fake or suspicious. This could lead to careers being damaged or even ruined. One example is the deceptively realistic videos of presidential candidates saying completely ridiculous and offensive things. The videos are so realistic that they could be used as false evidence or propaganda.

This technology can also undermine companies that use voice recognition as a security measure. Anyone who was previously afraid of their phone stealing their information has even more to fear now. Cloned voices could be used in scam phone calls to trick someone who doesn’t know any better.

The difference between your everyday AI art pieces and this voice filter is the ability to trick people out of their money or ruin innocent people’s careers. The lack of privacy we have experienced from a young age is becoming the norm, but the ability to replicate anyone’s voice goes a step too far. At the end of the day, this feature isn’t necessary, doesn’t need to be in the hands of the general public, and isn’t worth the dangers that it could cause.

Anna Dooley is a senior at South Lakes High School. This is her third year writing for the newspaper, and it has become one of her favorite activities. ...